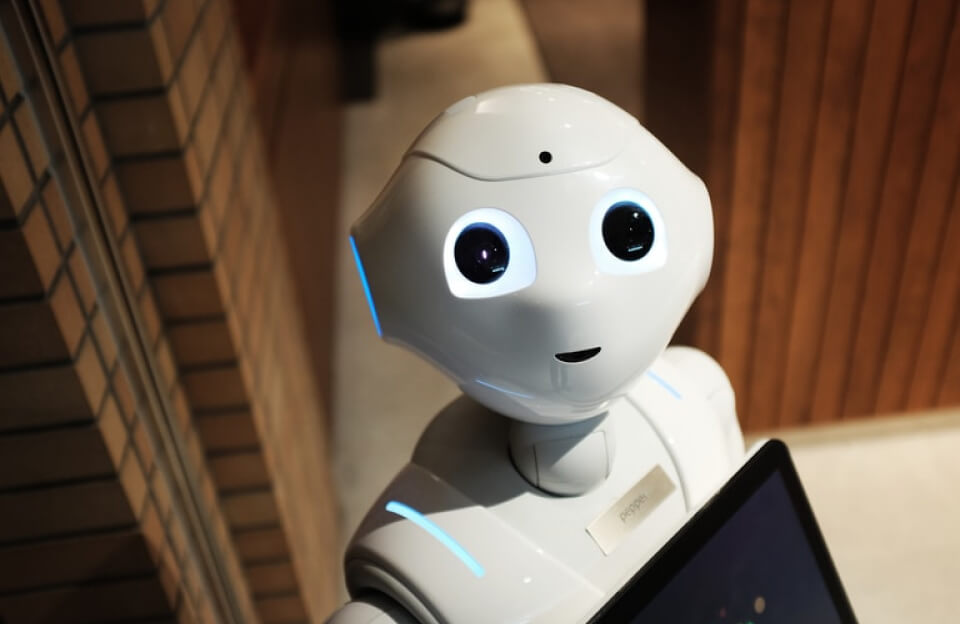

An AI agent is a system that can do more than answer one question. It can remember steps, use tools, and keep working through a task. That sounds powerful, but it also creates more ways to fail.

What makes an agent useful

Good agents need clear rules. They need to know what tools they can use, what information to save, and what to do when something goes wrong.

Testing matters too. Many agent problems are not language problems. They are workflow problems, such as using the wrong tool or repeating a bad step.
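A workflow problem can often be caught by checking the record of what the agent did, without judging its wording at all. The sketch below is one minimal way to do that, not a standard method. The `Step` record, the `find_workflow_problems` function, and the tool names are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    tool: str       # name of the tool the agent called (hypothetical names)
    argument: str   # the input it passed to that tool
    ok: bool        # whether the call succeeded


def find_workflow_problems(trace, allowed_tools):
    """Return plain-text descriptions of workflow problems in a trace."""
    problems = []
    failed = set()  # (tool, argument) pairs that have already failed
    for i, step in enumerate(trace):
        # Problem 1: the agent used a tool it was not supposed to use.
        if step.tool not in allowed_tools:
            problems.append(f"step {i}: wrong tool '{step.tool}'")
        # Problem 2: the agent repeated a step that had already failed.
        key = (step.tool, step.argument)
        if key in failed:
            problems.append(f"step {i}: repeated a failed step")
        if not step.ok:
            failed.add(key)
    return problems


trace = [
    Step("search", "flight prices", ok=False),
    Step("search", "flight prices", ok=False),
    Step("send_email", "hello", ok=True),
]
problems = find_workflow_problems(trace, allowed_tools={"search", "calculator"})
print(problems)
```

Here the check flags the second search as a repeat of a bad step and the email as a wrong tool, even though every message the agent wrote might have read perfectly well.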

The simple building blocks

- Memory, so the system can keep track of the task.

- Tool access, so it can act on the world.

- Testing, so teams can measure success.

- Safety rules, so it stays inside clear limits.

- Recovery steps, so errors do not grow into bigger problems.
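To make the list above concrete, here is a minimal sketch of how the pieces might fit together in code. It assumes the plan is a fixed list of steps rather than something a language model produces, and every name in it (`run_agent`, `add_one`, `broken`, `delete_all`) is hypothetical. A real agent would be far more involved.

```python
def run_agent(plan, tools, allowed, max_retries=1):
    """Run each (tool_name, argument) step and return a log of what happened."""
    memory = []  # Memory: a running log that keeps track of the task
    for tool_name, arg in plan:
        # Safety rule: skip any tool outside the allowed set.
        if tool_name not in allowed:
            memory.append(f"blocked {tool_name}")
            continue
        # Tool access, with a simple recovery step: retry, then give up.
        for _attempt in range(max_retries + 1):
            try:
                result = tools[tool_name](arg)
                memory.append(f"{tool_name} -> {result}")
                break
            except Exception as err:
                memory.append(f"{tool_name} failed: {err}")
        else:
            # Every attempt failed: stop here so the error does not spread.
            memory.append(f"gave up on {tool_name}")
            break
    return memory


def add_one(x):
    return x + 1


def broken(x):
    raise ValueError("no connection")


tools = {"add_one": add_one, "broken": broken, "delete_all": lambda x: "deleted"}
plan = [("add_one", 1), ("delete_all", None), ("broken", 0), ("add_one", 5)]
log = run_agent(plan, tools, allowed={"add_one", "broken"})

# Testing: compare the log with what a successful run should look like.
for line in log:
    print(line)
```

In this run the agent completes the first step, refuses `delete_all`, tries the broken tool twice, and then stops before the final step. Stopping early is a design choice in this sketch; another reasonable choice would be to skip the failed step and continue.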

Explained in plain terms, agents sound less magical and more useful.